Computer

From Wikipedia, the free encyclopedia

A computer is a machine that manipulates data according to a list of instructions.

The first devices that resemble modern computers date to the mid-20th century (1940–1945), although the computer concept and various machines similar to computers existed earlier. Early electronic computers were the size of a large room, consuming as much power as several hundred modern personal computers (PC).[1] Modern computers are based on tiny integrated circuits and are millions to billions of times more capable while occupying a fraction of the space.[2] Today, simple computers may be made small enough to fit into a wristwatch and be powered from a watch battery. Personal computers, in various forms, are icons of the Information Age and are what most people think of as "a computer"; however, the most common form of computer in use today is the embedded computer. Embedded computers are small, simple devices that are used to control other devices—for example, they may be found in machines ranging from fighter aircraft to industrial robots, digital cameras, and children's toys.

The ability to store and execute lists of instructions called programs makes computers extremely versatile and distinguishes them from calculators. The Church–Turing thesis is a mathematical statement of this versatility: any computer with a certain minimum capability is, in principle, capable of performing the same tasks that any other computer can perform. Therefore, computers with capability and complexity ranging from that of a personal digital assistant to a supercomputer are all able to perform the same computational tasks given enough time and storage capacity.

History of computing

It is difficult to identify any one device as the earliest computer, partly because the term "computer" has been subject to varying interpretations over time. Originally, the term "computer" referred to a person who performed numerical calculations (a human computer), often with the aid of a mechanical calculating device.

The history of the modern computer begins with two separate technologies—that of automated calculation and that of programmability.

Examples of early mechanical calculating devices included the abacus, the slide rule and arguably the astrolabe and the Antikythera mechanism (which dates from about 150-100 BC). Hero of Alexandria (c. 10–70 AD) built a mechanical theater which performed a play lasting 10 minutes and was operated by a complex system of ropes and drums that might be considered to be a means of deciding which parts of the mechanism performed which actions and when.[3] This is the essence of programmability.

The "castle clock", an astronomical clock invented by Al-Jazari in 1206, is considered to be the earliest programmable analog computer.[4] It displayed the zodiac, the solar and lunar orbits, a crescent moon-shaped pointer travelling across a gateway causing automatic doors to open every hour,[5][6] and five robotic musicians who play music when struck by levers operated by a camshaft attached to a water wheel. The length of day and night could be re-programmed every day in order to account for the changing lengths of day and night throughout the year.[4]

The end of the Middle Ages saw a re-invigoration of European mathematics and engineering, and Wilhelm Schickard's 1623 device was the first of a number of mechanical calculators constructed by European engineers. However, none of those devices fit the modern definition of a computer because they could not be programmed.

In 1801, Joseph Marie Jacquard made an improvement to the textile loom that used a series of punched paper cards as a template to allow his loom to weave intricate patterns automatically. The resulting Jacquard loom was an important step in the development of computers because the use of punched cards to define woven patterns can be viewed as an early, albeit limited, form of programmability.

It was the fusion of automatic calculation with programmability that produced the first recognizable computers. In 1837, Charles Babbage was the first to conceptualize and design a fully programmable mechanical computer that he called "The Analytical Engine".[7] Due to limited finances, and an inability to resist tinkering with the design, Babbage never actually built his Analytical Engine.

Large-scale automated data processing of punched cards was performed for the U.S. Census in 1890 by tabulating machines designed by Herman Hollerith and manufactured by the Computing Tabulating Recording Corporation, which later became IBM. By the end of the 19th century a number of technologies that would later prove useful in the realization of practical computers had begun to appear: the punched card, Boolean algebra, the vacuum tube (thermionic valve) and the teleprinter.

During the first half of the 20th century, many scientific computing needs were met by increasingly sophisticated analog computers, which used a direct mechanical or electrical model of the problem as a basis for computation. However, these were not programmable and generally lacked the versatility and accuracy of modern digital computers.

George Stibitz is internationally recognized as a father of the modern digital computer. While working at Bell Labs in November of 1937, Stibitz invented and built a relay-based calculator he dubbed the "Model K" (for "kitchen table", on which he had assembled it), which was the first to use binary circuits to perform an arithmetic operation. Later models added greater sophistication including complex arithmetic and programmability.[8]

A succession of steadily more powerful and flexible computing devices were constructed in the 1930s and 1940s, gradually adding the key features that are seen in modern computers. The use of digital electronics (largely invented by Claude Shannon in 1937) and more flexible programmability were vitally important steps, but defining one point along this road as "the first digital electronic computer" is difficult (Shannon 1940). Notable achievements include:

EDSAC was one of the first computers to implement the stored program (

von Neumann) architecture.

Several developers of ENIAC, recognizing its flaws, came up with a far more flexible and elegant design, which came to be known as the "stored program architecture" or von Neumann architecture. This design was first formally described by John von Neumann in the paper First Draft of a Report on the EDVAC, distributed in 1945. A number of projects to develop computers based on the stored-program architecture commenced around this time, the first of these being completed in Great Britain. The first to be demonstrated working was the Manchester Small-Scale Experimental Machine (SSEM or "Baby"), while the EDSAC, completed a year after SSEM, was the first practical implementation of the stored program design. Shortly thereafter, the machine originally described by von Neumann's paper—EDVAC—was completed but did not see full-time use for an additional two years.

Nearly all modern computers implement some form of the stored-program architecture, making it the single trait by which the word "computer" is now defined. While the technologies used in computers have changed dramatically since the first electronic, general-purpose computers of the 1940s, most still use the von Neumann architecture.

Computers using vacuum tubes as their electronic elements were in use throughout the 1950s, but by the 1960s had been largely replaced by transistor-based machines, which were smaller, faster, cheaper to produce, required less power, and were more reliable. The first transistorised computer was demonstrated at the University of Manchester in 1953.[10] In the 1970s, integrated circuit technology and the subsequent creation of microprocessors, such as the Intel 4004, further decreased size and cost and further increased speed and reliability of computers. By the 1980s, computers became sufficiently small and cheap to replace simple mechanical controls in domestic appliances such as washing machines. The 1980s also witnessed home computers and the now ubiquitous personal computer. With the evolution of the Internet, personal computers are becoming as common as the television and the telephone in the household.

Modern smartphones are fully-programmable computers in their own right, in a technical sense, and as of 2009 may well be the most common form of such computers in existence.

Stored program architecture

The defining feature of modern computers which distinguishes them from all other machines is that they can be programmed. That is to say that a list of instructions (the program) can be given to the computer and it will store them and carry them out at some time in the future.

In most cases, computer instructions are simple: add one number to another, move some data from one location to another, send a message to some external device, etc. These instructions are read from the computer's memory and are generally carried out (executed) in the order they were given. However, there are usually specialized instructions to tell the computer to jump ahead or backwards to some other place in the program and to carry on executing from there. These are called "jump" instructions (or branches). Furthermore, jump instructions may be made to happen conditionally so that different sequences of instructions may be used depending on the result of some previous calculation or some external event. Many computers directly support subroutines by providing a type of jump that "remembers" the location it jumped from and another instruction to return to the instruction following that jump instruction.

Program execution might be likened to reading a book. While a person will normally read each word and line in sequence, they may at times jump back to an earlier place in the text or skip sections that are not of interest. Similarly, a computer may sometimes go back and repeat the instructions in some section of the program over and over again until some internal condition is met. This is called the flow of control within the program and it is what allows the computer to perform tasks repeatedly without human intervention.

Comparatively, a person using a pocket calculator can perform a basic arithmetic operation such as adding two numbers with just a few button presses. But to add together all of the numbers from 1 to 1,000 would take thousands of button presses and a lot of time—with a near certainty of making a mistake. On the other hand, a computer may be programmed to do this with just a few simple instructions. For example:

mov #0,sum ; set sum to 0

mov #1,num ; set num to 1

loop: add num,sum ; add num to sum

add #1,num ; add 1 to num

cmp num,#1000 ; compare num to 1000

ble loop ; if num <= 1000, go back to 'loop'

halt ; end of program. stop running

Once told to run this program, the computer will perform the repetitive addition task without further human intervention. It will almost never make a mistake and a modern PC can complete the task in about a millionth of a second.[11]

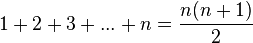

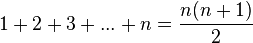

However, computers cannot "think" for themselves in the sense that they only solve problems in exactly the way they are programmed to. An intelligent human faced with the above addition task might soon realize that instead of actually adding up all the numbers one can simply use the equation

and arrive at the correct answer (500,500) with little work.[12] In other words, a computer programmed to add up the numbers one by one as in the example above would do exactly that without regard to efficiency or alternative solutions.

Programs

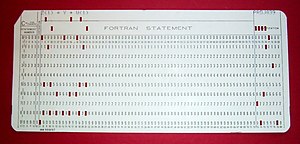

A 1970s

punched card containing one line from a

FORTRAN program. The card reads: "Z(1) = Y + W(1)" and is labelled "PROJ039" for identification purposes.

In practical terms, a computer program may run from just a few instructions to many millions of instructions, as in a program for a word processor or a web browser. A typical modern computer can execute billions of instructions per second (gigahertz or GHz) and rarely make a mistake over many years of operation. Large computer programs comprising several million instructions may take teams of programmers years to write, thus the probability of the entire program having been written without error is highly unlikely.

Errors in computer programs are called "bugs". Bugs may be benign and not affect the usefulness of the program, or have only subtle effects. But in some cases they may cause the program to "hang" - become unresponsive to input such as mouse clicks or keystrokes, or to completely fail or "crash". Otherwise benign bugs may sometimes may be harnessed for malicious intent by an unscrupulous user writing an "exploit" - code designed to take advantage of a bug and disrupt a program's proper execution. Bugs are usually not the fault of the computer. Since computers merely execute the instructions they are given, bugs are nearly always the result of programmer error or an oversight made in the program's design.[13]

In most computers, individual instructions are stored as machine code with each instruction being given a unique number (its operation code or opcode for short). The command to add two numbers together would have one opcode, the command to multiply them would have a different opcode and so on. The simplest computers are able to perform any of a handful of different instructions; the more complex computers have several hundred to choose from—each with a unique numerical code. Since the computer's memory is able to store numbers, it can also store the instruction codes. This leads to the important fact that entire programs (which are just lists of instructions) can be represented as lists of numbers and can themselves be manipulated inside the computer just as if they were numeric data. The fundamental concept of storing programs in the computer's memory alongside the data they operate on is the crux of the von Neumann, or stored program, architecture. In some cases, a computer might store some or all of its program in memory that is kept separate from the data it operates on. This is called the Harvard architecture after the Harvard Mark I computer. Modern von Neumann computers display some traits of the Harvard architecture in their designs, such as in CPU caches.

While it is possible to write computer programs as long lists of numbers (machine language) and this technique was used with many early computers,[14] it is extremely tedious to do so in practice, especially for complicated programs. Instead, each basic instruction can be given a short name that is indicative of its function and easy to remember—a mnemonic such as ADD, SUB, MULT or JUMP. These mnemonics are collectively known as a computer's assembly language. Converting programs written in assembly language into something the computer can actually understand (machine language) is usually done by a computer program called an assembler. Machine languages and the assembly languages that represent them (collectively termed low-level programming languages) tend to be unique to a particular type of computer. For instance, an ARM architecture computer (such as may be found in a PDA or a hand-held videogame) cannot understand the machine language of an Intel Pentium or the AMD Athlon 64 computer that might be in a PC.[15]

Though considerably easier than in machine language, writing long programs in assembly language is often difficult and error prone. Therefore, most complicated programs are written in more abstract high-level programming languages that are able to express the needs of the computer programmer more conveniently (and thereby help reduce programmer error). High level languages are usually "compiled" into machine language (or sometimes into assembly language and then into machine language) using another computer program called a compiler.[16] Since high level languages are more abstract than assembly language, it is possible to use different compilers to translate the same high level language program into the machine language of many different types of computer. This is part of the means by which software like video games may be made available for different computer architectures such as personal computers and various video game consoles.

The task of developing large software systems is an immense intellectual effort. Producing software with an acceptably high reliability on a predictable schedule and budget has proved historically to be a great challenge; the academic and professional discipline of software engineering concentrates specifically on this problem.

Example

A traffic light showing red.

Suppose a computer is being employed to drive a traffic signal at an intersection between two streets. The computer has the following three basic instructions.

- ON(Streetname, Color) Turns the light on Streetname with a specified Color on.

- OFF(Streetname, Color) Turns the light on Streetname with a specified Color off.

- WAIT(Seconds) Waits a specifed number of seconds.

- START Starts the program

- REPEAT Tells the computer to repeat a specified part of the program in a loop.

Comments are marked with a // on the left margin. Assume the streetnames are Broadway and Main.

START

//Let Broadway traffic go

OFF(Broadway, Red)

ON(Broadway, Green)

WAIT(60 seconds)

//Stop Broadway traffic

OFF(Broadway, Green)

ON(Broadway, Yellow)

WAIT(3 seconds)

OFF(Broadway, Yellow)

ON(Broadway, Red)

//Let Main traffic go

OFF(Main, Red)

ON(Main, Green)

WAIT(60 seconds)

//Stop Main traffic

OFF(Main, Green)

ON(Main, Yellow)

WAIT(3 seconds)

OFF(Main, Yellow)

ON(Main, Red)

//Tell computer to continuously repeat the program.

REPEAT ALL

With this set of instructions, the computer would cycle the light continually through red, green, yellow and back to red again on both streets.

However, suppose there is a simple on/off switch connected to the computer that is intended to be used to make the light flash red while some maintenance operation is being performed. The program might then instruct the computer to:

START

IF Switch == OFF then: //Normal traffic signal operation

{

//Let Broadway traffic go

OFF(Broadway, Red)

ON(Broadway, Green)

WAIT(60 seconds)

//Stop Broadway traffic

OFF(Broadway, Green)

ON(Broadway, Yellow)

WAIT(3 seconds)

OFF(Broadway, Yellow)

ON(Broadway, Red)

//Let Main traffic go

OFF(Main, Red)

ON(Main, Green)

WAIT(60 seconds)

//Stop Main traffic

OFF(Main, Green)

ON(Main, Yellow)

WAIT(3 seconds)

OFF(Main, Yellow)

ON(Main, Red)

//Tell the computer to repeat this section continuously.

REPEAT THIS SECTION

}

IF Switch == ON THEN: //Maintenance Mode

{

//Turn the red lights on and wait 1 second.

ON(Broadway, Red)

ON(Main, Red)

WAIT(1 second)

//Turn the red lights off and wait 1 second.

OFF(Broadway, Red)

OFF(Main, Red)

WAIT(1 second)

//Tell the comptuer to repeat the statements in this section.

REPEAT THIS SECTION

}

In this manner, the traffic signal will run a flash-red program when the switch is on, and will run the normal program when the switch is off. Both of these program examples show the basic layout of a computer program in a simple, familiar context of a traffic signal. Any experienced programmer can spot many software bugs in the program, for instance, not making sure that the green light is off when the switch is set to flash red. However, to remove all possible bugs would make this program much longer and more complicated, and would be confusing to nontechnical readers: the aim of this example is a simple demonstration of how computer instructions are laid out.

How computers work

A general purpose computer has four main sections: the arithmetic and logic unit (ALU), the control unit, the memory, and the input and output devices (collectively termed I/O). These parts are interconnected by busses, often made of groups of wires.

The control unit, ALU, registers, and basic I/O (and often other hardware closely linked with these) are collectively known as a central processing unit (CPU). Early CPUs were composed of many separate components but since the mid-1970s CPUs have typically been constructed on a single integrated circuit called a microprocessor.

Control unit

The control unit (often called a control system or central controller) directs the various components of a computer. It reads and interprets (decodes) instructions in the program one by one. The control system decodes each instruction and turns it into a series of control signals that operate the other parts of the computer.[17] Control systems in advanced computers may change the order of some instructions so as to improve performance.

A key component common to all CPUs is the program counter, a special memory cell (a register) that keeps track of which location in memory the next instruction is to be read from.[18]

Diagram showing how a particular

MIPS architecture instruction would be decoded by the control system.

The control system's function is as follows—note that this is a simplified description, and some of these steps may be performed concurrently or in a different order depending on the type of CPU:

- Read the code for the next instruction from the cell indicated by the program counter.

- Decode the numerical code for the instruction into a set of commands or signals for each of the other systems.

- Increment the program counter so it points to the next instruction.

- Read whatever data the instruction requires from cells in memory (or perhaps from an input device). The location of this required data is typically stored within the instruction code.

- Provide the necessary data to an ALU or register.

- If the instruction requires an ALU or specialized hardware to complete, instruct the hardware to perform the requested operation.

- Write the result from the ALU back to a memory location or to a register or perhaps an output device.

- Jump back to step (1).

Since the program counter is (conceptually) just another set of memory cells, it can be changed by calculations done in the ALU. Adding 100 to the program counter would cause the next instruction to be read from a place 100 locations further down the program. Instructions that modify the program counter are often known as "jumps" and allow for loops (instructions that are repeated by the computer) and often conditional instruction execution (both examples of control flow).

It is noticeable that the sequence of operations that the control unit goes through to process an instruction is in itself like a short computer program—and indeed, in some more complex CPU designs, there is another yet smaller computer called a microsequencer that runs a microcode program that causes all of these events to happen.

Arithmetic/logic unit (ALU)

The ALU is capable of performing two classes of operations: arithmetic and logic.[19]

The set of arithmetic operations that a particular ALU supports may be limited to adding and subtracting or might include multiplying or dividing, trigonometry functions (sine, cosine, etc) and square roots. Some can only operate on whole numbers (integers) whilst others use floating point to represent real numbers—albeit with limited precision. However, any computer that is capable of performing just the simplest operations can be programmed to break down the more complex operations into simple steps that it can perform. Therefore, any computer can be programmed to perform any arithmetic operation—although it will take more time to do so if its ALU does not directly support the operation. An ALU may also compare numbers and return boolean truth values (true or false) depending on whether one is equal to, greater than or less than the other ("is 64 greater than 65?").

Logic operations involve Boolean logic: AND, OR, XOR and NOT. These can be useful both for creating complicated conditional statements and processing boolean logic.

Superscalar computers may contain multiple ALUs so that they can process several instructions at the same time.[20] Graphics processors and computers with SIMD and MIMD features often provide ALUs that can perform arithmetic on vectors and matrices.

Memory

Magnetic core memory was popular main memory for computers through the 1960s until it was completely replaced by semiconductor memory.

A computer's memory can be viewed as a list of cells into which numbers can be placed or read. Each cell has a numbered "address" and can store a single number. The computer can be instructed to "put the number 123 into the cell numbered 1357" or to "add the number that is in cell 1357 to the number that is in cell 2468 and put the answer into cell 1595". The information stored in memory may represent practically anything. Letters, numbers, even computer instructions can be placed into memory with equal ease. Since the CPU does not differentiate between different types of information, it is up to the software to give significance to what the memory sees as nothing but a series of numbers.

In almost all modern computers, each memory cell is set up to store binary numbers in groups of eight bits (called a byte). Each byte is able to represent 256 different numbers; either from 0 to 255 or -128 to +127. To store larger numbers, several consecutive bytes may be used (typically, two, four or eight). When negative numbers are required, they are usually stored in two's complement notation. Other arrangements are possible, but are usually not seen outside of specialized applications or historical contexts. A computer can store any kind of information in memory as long as it can be somehow represented in numerical form. Modern computers have billions or even trillions of bytes of memory.

The CPU contains a special set of memory cells called registers that can be read and written to much more rapidly than the main memory area. There are typically between two and one hundred registers depending on the type of CPU. Registers are used for the most frequently needed data items to avoid having to access main memory every time data is needed. Since data is constantly being worked on, reducing the need to access main memory (which is often slow compared to the ALU and control units) greatly increases the computer's speed.

Computer main memory comes in two principal varieties: random access memory or RAM and read-only memory or ROM. RAM can be read and written to anytime the CPU commands it, but ROM is pre-loaded with data and software that never changes, so the CPU can only read from it. ROM is typically used to store the computer's initial start-up instructions. In general, the contents of RAM is erased when the power to the computer is turned off while ROM retains its data indefinitely. In a PC , the ROM contains a specialized program called the BIOS that orchestrates loading the computer's operating system from the hard disk drive into RAM whenever the computer is turned on or reset. In embedded computers, which frequently do not have disk drives, all of the software required to perform the task may be stored in ROM. Software that is stored in ROM is often called firmware because it is notionally more like hardware than software. Flash memory blurs the distinction between ROM and RAM by retaining data when turned off but being rewritable like RAM. However, flash memory is typically much slower than conventional ROM and RAM so its use is restricted to applications where high speeds are not required.[21]

In more sophisticated computers there may be one or more RAM cache memories which are slower than registers but faster than main memory. Generally computers with this sort of cache are designed to move frequently needed data into the cache automatically, often without the need for any intervention on the programmer's part.

Input/output (I/O)

Main article:

Input/output

Hard disks are common I/O devices used with computers.

I/O is the means by which a computer exchanges information with the outside world.[22] Devices that provide input or output to the computer are called peripherals.[23] On a typical personal computer, peripherals include input devices like the keyboard and mouse, and output devices such as the display and printer. Hard disk drives, floppy disk drives and optical disc drives serve as both input and output devices. Computer networking is another form of I/O.

Often, I/O devices are complex computers in their own right with their own CPU and memory. A graphics processing unit might contain fifty or more tiny computers that perform the calculations necessary to display 3D graphics[citation needed]. Modern desktop computers contain many smaller computers that assist the main CPU in performing I/O.

Multitasking

While a computer may be viewed as running one gigantic program stored in its main memory, in some systems it is necessary to give the appearance of running several programs simultaneously. This is achieved by multitasking i.e. having the computer switch rapidly between running each program in turn.[24]

One means by which this is done is with a special signal called an interrupt which can periodically cause the computer to stop executing instructions where it was and do something else instead. By remembering where it was executing prior to the interrupt, the computer can return to that task later. If several programs are running "at the same time", then the interrupt generator might be causing several hundred interrupts per second, causing a program switch each time. Since modern computers typically execute instructions several orders of magnitude faster than human perception, it may appear that many programs are running at the same time even though only one is ever executing in any given instant. This method of multitasking is sometimes termed "time-sharing" since each program is allocated a "slice" of time in turn.[25]

Before the era of cheap computers, the principle use for multitasking was to allow many people to share the same computer.

Seemingly, multitasking would cause a computer that is switching between several programs to run more slowly - in direct proportion to the number of programs it is running. However, most programs spend much of their time waiting for slow input/output devices to complete their tasks. If a program is waiting for the user to click on the mouse or press a key on the keyboard, then it will not take a "time slice" until the event it is waiting for has occurred. This frees up time for other programs to execute so that many programs may be run at the same time without unacceptable speed loss.

Multiprocessing

Cray designed many supercomputers that used multiprocessing heavily.

Some computers may divide their work between one or more separate CPUs, creating a multiprocessing configuration. Traditionally, this technique was utilized only in large and powerful computers such as supercomputers, mainframe computers and servers. However, multiprocessor and multi-core (multiple CPUs on a single integrated circuit) personal and laptop computers have become widely available and are beginning to see increased usage in lower-end markets as a result.

Supercomputers in particular often have highly unique architectures that differ significantly from the basic stored-program architecture and from general purpose computers.[26] They often feature thousands of CPUs, customized high-speed interconnects, and specialized computing hardware. Such designs tend to be useful only for specialized tasks due to the large scale of program organization required to successfully utilize most of the available resources at once. Supercomputers usually see usage in large-scale simulation, graphics rendering, and cryptography applications, as well as with other so-called "embarrassingly parallel" tasks.

Networking and the Internet

Visualization of a portion of the

routes on the Internet.

Computers have been used to coordinate information between multiple locations since the 1950s. The U.S. military's SAGE system was the first large-scale example of such a system, which led to a number of special-purpose commercial systems like Sabre.[27]

In the 1970s, computer engineers at research institutions throughout the United States began to link their computers together using telecommunications technology. This effort was funded by ARPA (now DARPA), and the computer network that it produced was called the ARPANET.[28] The technologies that made the Arpanet possible spread and evolved.

In time, the network spread beyond academic and military institutions and became known as the Internet. The emergence of networking involved a redefinition of the nature and boundaries of the computer. Computer operating systems and applications were modified to include the ability to define and access the resources of other computers on the network, such as peripheral devices, stored information, and the like, as extensions of the resources of an individual computer. Initially these facilities were available primarily to people working in high-tech environments, but in the 1990s the spread of applications like e-mail and the World Wide Web, combined with the development of cheap, fast networking technologies like Ethernet and ADSL saw computer networking become almost ubiquitous. In fact, the number of computers that are networked is growing phenomenally. A very large proportion of personal computers regularly connect to the Internet to communicate and receive information. "Wireless" networking, often utilizing mobile phone networks, has meant networking is becoming increasingly ubiquitous even in mobile computing environments.

Further topics

Hardware

The term hardware covers all of those parts of a computer that are tangible objects. Circuits, displays, power supplies, cables, keyboards, printers and mice are all hardware.

History of computing hardware | First Generation (Mechanical/Electromechanical) | Calculators | Antikythera mechanism, Difference Engine, Norden bombsight |

| Programmable Devices | Jacquard loom, Analytical Engine, Harvard Mark I, Z3 |

| Second Generation (Vacuum Tubes) | Calculators | Atanasoff–Berry Computer, IBM 604, UNIVAC 60, UNIVAC 120 |

| Programmable Devices | Colossus, ENIAC, Manchester Small-Scale Experimental Machine, EDSAC, Manchester Mark 1, CSIRAC, EDVAC, UNIVAC I, IBM 701, IBM 702, IBM 650, Z22 |

| Third Generation (Discrete transistors and SSI, MSI, LSI Integrated circuits) | Mainframes | IBM 7090, IBM 7080, System/360, BUNCH |

| Minicomputer | PDP-8, PDP-11, System/32, System/36 |

| Fourth Generation (VLSI integrated circuits) | Minicomputer | VAX, IBM System i |

| 4-bit microcomputer | Intel 4004, Intel 4040 |

| 8-bit microcomputer | Intel 8008, Intel 8080, Motorola 6800, Motorola 6809, MOS Technology 6502, Zilog Z80 |

| 16-bit microcomputer | Intel 8088, Zilog Z8000, WDC 65816/65802 |

| 32-bit microcomputer | Intel 80386, Pentium, Motorola 68000, ARM architecture |

| 64-bit microcomputer[29] | Alpha, MIPS, PA-RISC, PowerPC, SPARC, x86-64 |

| Embedded computer | Intel 8048, Intel 8051 |

| Personal computer | Desktop computer, Home computer, Laptop computer, Personal digital assistant (PDA), Portable computer, Tablet computer, Wearable computer |

| Theoretical/experimental | Quantum computer, Chemical computer, DNA computing, Optical computer, Spintronics based computer |

Other Hardware Topics | Peripheral device (Input/output) | Input | Mouse, Keyboard, Joystick, Image scanner |

| Output | Monitor, Printer |

| Both | Floppy disk drive, Hard disk, Optical disc drive, Teleprinter |

| Computer busses | Short range | RS-232, SCSI, PCI, USB |

| Long range (Computer networking) | Ethernet, ATM, FDDI |

Software

Software refers to parts of the computer which do not have a material form, such as programs, data, protocols, etc. When software is stored in hardware that cannot easily be modified (such as BIOS ROM in an IBM PC compatible), it is sometimes called "firmware" to indicate that it falls into an uncertain area somewhere between hardware and software.

Computer software | Operating system | Unix and BSD | UNIX System V, AIX, HP-UX, Solaris (SunOS), IRIX, List of BSD operating systems |

| GNU/Linux | List of Linux distributions, Comparison of Linux distributions |

| Microsoft Windows | Windows 95, Windows 98, Windows NT, Windows 2000, Windows XP, Windows Vista, Windows CE |

| DOS | 86-DOS (QDOS), PC-DOS, MS-DOS, FreeDOS |

| Mac OS | Mac OS classic, Mac OS X |

| Embedded and real-time | List of embedded operating systems |

| Experimental | Amoeba, Oberon/Bluebottle, Plan 9 from Bell Labs |

| Library | Multimedia | DirectX, OpenGL, OpenAL |

| Programming library | C standard library, Standard template library |

| Data | Protocol | TCP/IP, Kermit, FTP, HTTP, SMTP |

| File format | HTML, XML, JPEG, MPEG, PNG |

| User interface | Graphical user interface (WIMP) | Microsoft Windows, GNOME, KDE, QNX Photon, CDE, GEM |

| Text-based user interface | Command-line interface, Text user interface |

| Application | Office suite | Word processing, Desktop publishing, Presentation program, Database management system, Scheduling & Time management, Spreadsheet, Accounting software |

| Internet Access | Browser, E-mail client, Web server, Mail transfer agent, Instant messaging |

| Design and manufacturing | Computer-aided design, Computer-aided manufacturing, Plant management, Robotic manufacturing, Supply chain management |

| Graphics | Raster graphics editor, Vector graphics editor, 3D modeler, Animation editor, 3D computer graphics, Video editing, Image processing |

| Audio | Digital audio editor, Audio playback, Mixing, Audio synthesis, Computer music |

| Software Engineering | Compiler, Assembler, Interpreter, Debugger, Text Editor, Integrated development environment, Performance analysis, Revision control, Software configuration management |

| Educational | Edutainment, Educational game, Serious game, Flight simulator |

| Games | Strategy, Arcade, Puzzle, Simulation, First-person shooter, Platform, Massively multiplayer, Interactive fiction |

| Misc | Artificial intelligence, Antivirus software, Malware scanner, Installer/Package management systems, File manager |

Programming languages

Programming languages provide various ways of specifying programs for computers to run. Unlike natural languages, programming languages are designed to permit no ambiguity and to be concise. They are purely written languages and are often difficult to read aloud. They are generally either translated into machine language by a compiler or an assembler before being run, or translated directly at run time by an interpreter. Sometimes programs are executed by a hybrid method of the two techniques. There are thousands of different programming languages—some intended to be general purpose, others useful only for highly specialized applications.

Programming Languages | Lists of programming languages | Timeline of programming languages, Categorical list of programming languages, Generational list of programming languages, Alphabetical list of programming languages, Non-English-based programming languages |

| Commonly used Assembly languages | ARM, MIPS, x86 |

| Commonly used High level languages | Ada, BASIC, C, C++, C#, COBOL, Fortran, Java, Lisp, Pascal, Object Pascal |

| Commonly used Scripting languages | Bourne script, JavaScript, Python, Ruby, PHP, Perl |

Professions and organizations

As the use of computers has spread throughout society, there are an increasing number of careers involving computers. Following the theme of hardware, software and firmware, the brains of people who work in the industry are sometimes known irreverently as wetware or "meatware".

Computer-related professions | Hardware-related | Electrical engineering, Electronics engineering, Computer engineering, Telecommunications engineering, Optical engineering, Nanoscale engineering |

| Software-related | Computer science, Human-computer interaction, Information technology, Software engineering, Scientific computing, Web design, Desktop publishing |

The need for computers to work well together and to be able to exchange information has spawned the need for many standards organizations, clubs and societies of both a formal and informal nature.

Organizations | Standards groups | ANSI, IEC, IEEE, IETF, ISO, W3C |

| Professional Societies | ACM, ACM Special Interest Groups, IET, IFIP |

| Free/Open source software groups | Free Software Foundation, Mozilla Foundation, Apache Software Foundation |

See also

External links

Notes

- ^ In 1946, ENIAC consumed an estimated 174 kW. By comparison, a typical personal computer may use around 400 W; over four hundred times less. (Kempf 1961)

- ^ Early computers such as Colossus and ENIAC were able to process between 5 and 100 operations per second. A modern "commodity" microprocessor (as of 2007) can process billions of operations per second, and many of these operations are more complicated and useful than early computer operations.

- ^ "Heron of Alexandria". http://www.mlahanas.de/Greeks/HeronAlexandria2.htm. Retrieved on 2008-01-15.

- ^ a b Ancient Discoveries, Episode 11: Ancient Robots, History Channel, http://www.youtube.com/watch?v=rxjbaQl0ad8, retrieved on 2008-09-06

- ^ Howard R. Turner (1997), Science in Medieval Islam: An Illustrated Introduction, p. 184, University of Texas Press, ISBN 0292781490

- ^ Donald Routledge Hill, "Mechanical Engineering in the Medieval Near East", Scientific American, May 1991, pp. 64-9 (cf. Donald Routledge Hill, Mechanical Engineering)

- ^ The Analytical Engine should not be confused with Babbage's difference engine which was a non-programmable mechanical calculator.

- ^ "Inventor Profile: George R. Stibitz". National Inventors Hall of Fame Foundation, Inc.. http://www.invent.org/hall_of_fame/140.html.

- ^ B. Jack Copeland, ed., Colossus: The Secrets of Bletchley Park's Codebreaking Computers, Oxford University Press, 2006

- ^ Lavington 1998, p. 37

- ^ This program was written similarly to those for the PDP-11 minicomputer and shows some typical things a computer can do. All the text after the semicolons are comments for the benefit of human readers. These have no significance to the computer and are ignored. (Digital Equipment Corporation 1972)

- ^ Attempts are often made to create programs that can overcome this fundamental limitation of computers. Software that mimics learning and adaptation is part of artificial intelligence.

- ^ It is not universally true that bugs are solely due to programmer oversight. Computer hardware may fail or may itself have a fundamental problem that produces unexpected results in certain situations. For instance, the Pentium FDIV bug caused some Intel microprocessors in the early 1990s to produce inaccurate results for certain floating point division operations. This was caused by a flaw in the microprocessor design and resulted in a partial recall of the affected devices.

- ^ Even some later computers were commonly programmed directly in machine code. Some minicomputers like the DEC PDP-8 could be programmed directly from a panel of switches. However, this method was usually used only as part of the booting process. Most modern computers boot entirely automatically by reading a boot program from some non-volatile memory.

- ^ However, there is sometimes some form of machine language compatibility between different computers. An x86-64 compatible microprocessor like the AMD Athlon 64 is able to run most of the same programs that an Intel Core 2 microprocessor can, as well as programs designed for earlier microprocessors like the Intel Pentiums and Intel 80486. This contrasts with very early commercial computers, which were often one-of-a-kind and totally incompatible with other computers.

- ^ High level languages are also often interpreted rather than compiled. Interpreted languages are translated into machine code on the fly by another program called an interpreter.

- ^ The control unit's rule in interpreting instructions has varied somewhat in the past. While the control unit is solely responsible for instruction interpretation in most modern computers, this is not always the case. Many computers include some instructions that may only be partially interpreted by the control system and partially interpreted by another device. This is especially the case with specialized computing hardware that may be partially self-contained. For example, EDVAC, the first modern stored program computer to be designed, used a central control unit that only interpreted four instructions. All of the arithmetic-related instructions were passed on to its arithmetic unit and further decoded there.

- ^ Instructions often occupy more than one memory address, so the program counters usually increases by the number of memory locations required to store one instruction.

- ^ David J. Eck (2000). The Most Complex Machine: A Survey of Computers and Computing. A K Peters, Ltd.. p. 54. ISBN 9781568811284.

- ^ Erricos John Kontoghiorghes (2006). Handbook of Parallel Computing and Statistics. CRC Press. p. 45. ISBN 9780824740672.

- ^ Flash memory also may only be rewritten a limited number of times before wearing out, making it less useful for heavy random access usage. (Verma 1988)

- ^ Donald Eadie (1968). Introduction to the Basic Computer. Prentice-Hall. p. 12.

- ^ Arpad Barna; Dan I. Porat (1976). Introduction to Microcomputers and the Microprocessors. Wiley. p. 85. ISBN 9780471050513.

- ^ Jerry Peek; Grace Todino, John Strang (2002). Learning the UNIX Operating System: A Concise Guide for the New User. O'Reilly. p. 130. ISBN 9780596002619.

- ^ Gillian M. Davis (2002). Noise Reduction in Speech Applications. CRC Press. p. 111. ISBN 9780849309496.

- ^ However, it is also very common to construct supercomputers out of many pieces of cheap commodity hardware; usually individual computers connected by networks. These so-called computer clusters can often provide supercomputer performance at a much lower cost than customized designs. While custom architectures are still used for most of the most powerful supercomputers, there has been a proliferation of cluster computers in recent years. (TOP500 2006)

- ^ Agatha C. Hughes (2000). Systems, Experts, and Computers. MIT Press. p. 161. ISBN 9780262082853. "The experience of SAGE helped make possible the first truly large-scale commercial real-time network: the SABRE computerized airline reservations system..."

- ^ "A Brief History of the Internet". Internet Society. http://www.isoc.org/internet/history/brief.shtml. Retrieved on 2008-09-20.

- ^ Most major 64-bit instruction set architectures are extensions of earlier designs. All of the architectures listed in this table, except for Alpha, existed in 32-bit forms before their 64-bit incarnations were introduced.